Excel data analysis templates toolkit

[Ad Space — Insert ad script here]

Downloads

Download the Markdown guide for offline reading or AI assistant context. The ZIP bundles all the Excel templates. Open the templates in Microsoft Excel or any compatible spreadsheet application.

Strategic analysis

3. Strategic analysis overview

Strategic analysis includes all those models and techniques that study the environment in which an organization operates. This environment ranges from external trends (economy, policies, technological innovations, etc.) to the organization (mission, strategy, goals, value chain, etc.) and its business environment (competitors, substitute products, customers, suppliers, etc.).

The objective of a strategic analysis is to investigate ideas and problems either to evaluate the strategic direction of the organization or to identify possible future strategies. I will focus only on the descriptive, predictive, and prescriptive analytical models of strategic analysis, excluding any strategy implementation and follow-up techniques.

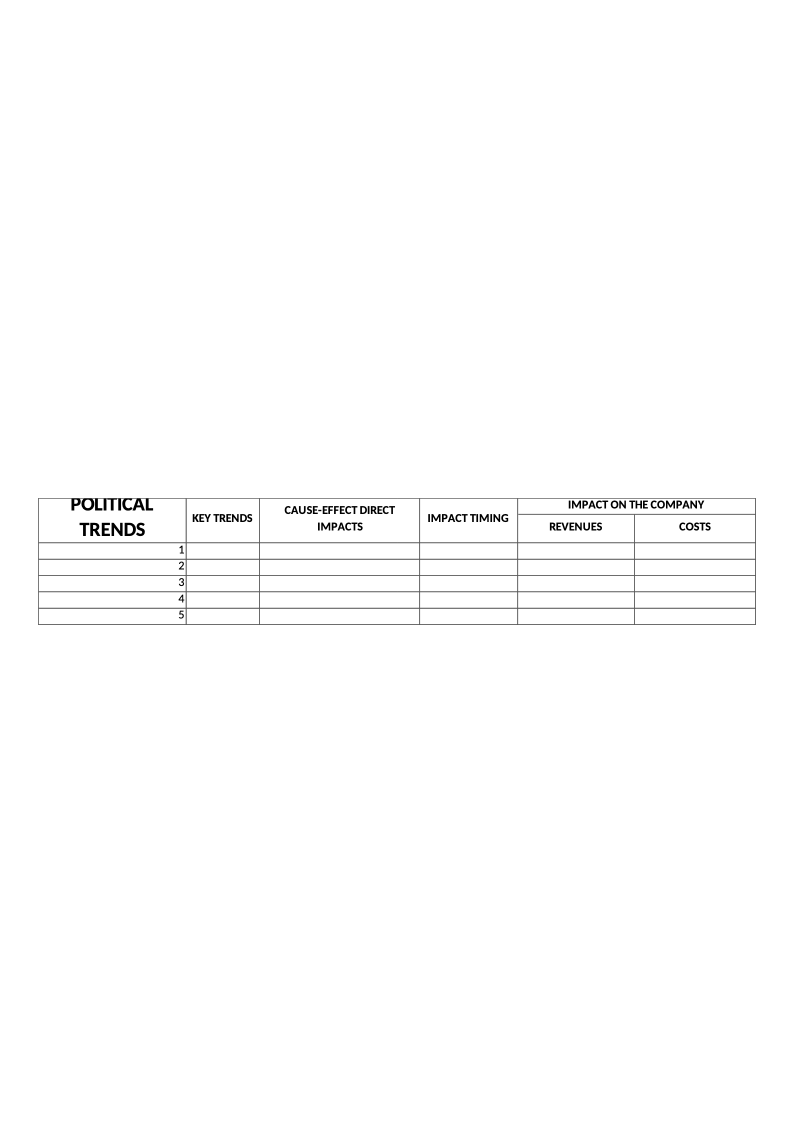

4. ENVIRONMENTAL ANALYSIS (PEST)

OBJECTIVE

Identify external key trends and their impacts on an organization, company, or department.

DESCRIPTION

PEST analysis and all its variations (PESTEL, PESTLIED, STEEPLE, etc.) are used to analyze the key trends that affect the surrounding environment of an organization but on which the organization has no influence. The key idea is to identify the trends that can most affect the company, organization, or department in several areas:

-

POLITICAL

-

ENVIRONMENTAL

-

ECONOMIC

-

SOCIO-CULTURAL

-

TECHNOLOGICAL

-

…

Examples of key trends are an increase in interest rates, a new law, and the increasing use of mobile devices. Many more examples can be found quite easily, but the main idea that I want to transmit is that the division by area is just a way to facilitate the identification of the key trends, as the decision on whether to include a trend in one area or another has little or no effect on the model. This model is commonly used with other tools that complement the analysis of the business environment (competitive analysis, Porter’s five forces analysis, etc.), and it is usually used for SWOT analysis (strengths, weaknesses, opportunities, threats), in which it represents the external factors, namely opportunities and threats.

To avoid using this model as a mere theoretical tool, once the key trends have been identified, it is necessary to define the impacts of each one using cause–effect reasoning and to try to quantify the positive or negative effect that each trend can have on the organization in terms of revenues or costs. Some trends could have an almost direct impact, but others require several hypotheses, estimations, and calculations. Often it is necessary to determine the impact on intermediary indicators before calculating the monetary impact. For example, the growing sensibility for recycling can influence the company’s packaging. In this case we can estimate the loss of reputation first, then the decrease in demand, and finally the impact on revenues. Another important element to be identified is the timing of the impact, since it will affect the actions that will be taken and when they will be implemented.

There are two main methods for gathering data for this model. The first one is to exploit experts’ knowledge of several sectors and areas to identify the key trends affecting the future of the organization. Several techniques can be applied, such as the Delphi method, brainstorming, and think tanks. The second method is more “DIY” and it concerns gathering published forecasts of experts, futurists, organizations, governments, and so on. A common error with both methods is that people tend to jump directly to possible solutions, but doing so worsens the performance of the model. In this phase the focus just needs to be on the trends and on quantifying the impact that they will have on the organization.

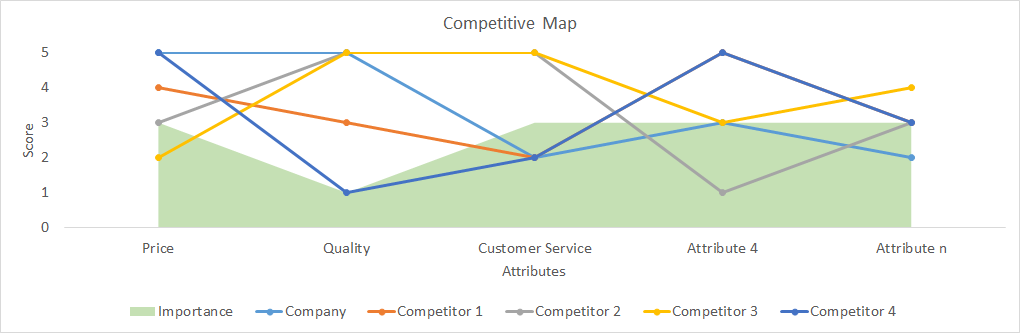

5. COMPETITIVE MAP

OBJECTIVE

Analyze the positioning of a company or a product in comparison with other companies or products by evaluating several attributes.

DESCRIPTION

A competitive map is a tool that compares a company or products with several competitors according to the most important attributes. We can also add to the map the relative importance of each attribute. From this map we can understand how a company is positioned in relation to several attributes and define the key issues for strategic decisions. For example, we can decide to focus on the communication and promotion about the quality of our product if we are well positioned and it is important for customers.

The data for this map are usually gathered through surveys. If the selection of attributes is not clear or the number of attributes is large, we can ask a preselection question whereby interviewees rank the most important attributes. Then they will be asked about the importance and performance of the selected attributes for each company or product. I suggest dividing the question into two parts:

-

Importance: give a score from 1 to 5 for each attribute;

-

Performance: give a score from 1 to 5 for each combination of attribute and company (or product).

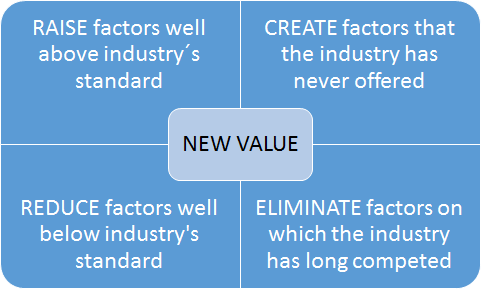

6. BLUE OCEAN MODEL

OBJECTIVE

Identify possible disruptive business ideas.

DESCRIPTION

This method is described in the book Blue Ocean Strategy written by W. Chan Kim and Renée Mauborgne in 2005.[^8] The idea behind it is that companies should not focus on beating competitors (red oceans) but on creating “blue oceans,” meaning new uncontested markets. Accordingly, the competition will be irrelevant and new value will be created for both the company and its customers.

The book offers several tools and approaches to apply a blue ocean strategy systematically (these tools are available on the official website[^9]). One of the proposed tools is an action framework that suggests implementing a blue ocean strategy by modifying, creating, or eliminating factors concerning the product or service offered, for example a significant reduction of prices thanks to a different production methodology or the creation of touchscreens in the mobile industry.

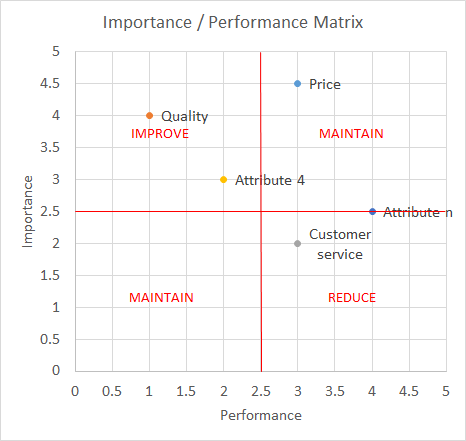

7. IMPORTANCE–PERFORMANCE MATRIX

Decide whether to improve, maintain, or reduce specific company success factors or product attributes.

DESCRIPTION

This model is used to assess the performance of a company on several success factors or the performance of a product on several attributes. It can be a part of the competitive map model presented in this book.

The data for this map are usually gathered through surveys. If the selection of attributes is not clear or the number of attributes is large, we can ask a preselection question whereby interviewees rank the most important attributes. Then the interviewees will be asked to give a score from 1 to 5 for each attribute.

7 IMPORTANCE-PERFORMANCE MATRIX

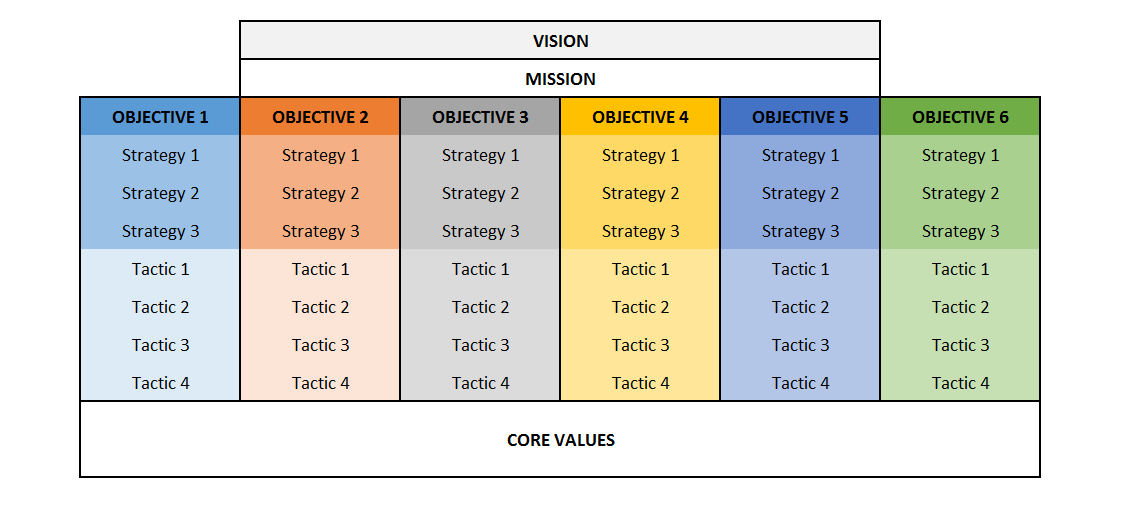

8. VMOST

OBJECTIVE

To describe what the company wants to achieve and how it intends to achieve it.

DESCRIPTION

It is a descriptive analysis tool that enables the reflection or communication of the strategic intent of a company, since it includes:

-

What the company wants to achieve: vision, mission, and objectives

-

How to achieve them: strategies and tactics

In more detail, the components of these tools are:

-

Vision: aspiration for future market-oriented results;

-

Mission: the fundamental purpose of the company: why the company exists;

-

Objectives: the goals that the company intends to reach; all these objectives are aligned with both the mission and the vision;

-

Strategies: long- and medium-term actions to achieve the objectives;

-

Tactics: short-term actions to achieve the objectives.

Usually the vision and mission are not clearly understood, but put simply the vision is “what we want to become,” for example “to be in the top three tech companies in the world,” while the vision is “what we want to do,” that is, why we exist, for example “to create innovative products ….” The vision and mission are often accompanied by a description of the company’s core values, meaning the company’s principals that reflect its culture (e.g. integrity, transparency, and environmental friendliness).

From this analysis we can obtain some of the strengths and weaknesses to include in the SWOT analysis. If the VMOST is well defined and communicated and people are committed to achieving what it states, it is a strength in the SWOT; otherwise, it is a weakness.

The information for the VMOST can already be defined and documented in a company or it can be built through brainstorming, workshops, or interviews with high-level managers and directors.

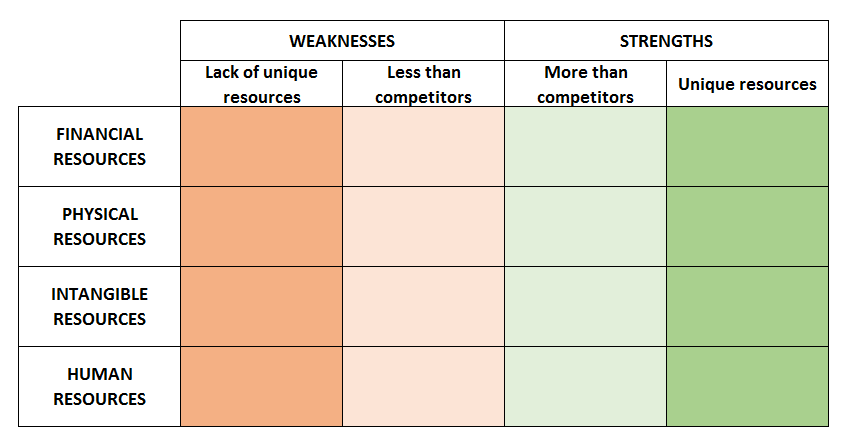

9. RESOURCE AUDIT

OBJECTIVE

Identify the weaknesses and strengths within an organization.

DESCRIPTION

This model identifies the resources available to an organization, which can be divided into:

-

Financial resources (funds, loans, credit …)

-

Physical resources (plants, buildings, machinery …)

-

Intangible resources (reputation, know-how …)

-

Human resources

To define the strengths and weaknesses (which can be used afterwards in a SWOT analysis), we should ask several questions, such as:

-

Are the available resources enough for the current competitive situation?

-

Will they be able to support future developments?

-

How difficult is it to obtain more of these resources for the company? And for its competitors?

-

How difficult is it for a competitor to imitate these resources or core competencies?

-

How important are they for the core business?

Simplifying, we can state that it is a strength for the organization when it possesses unique resources or when it has more or easier access to imitable resources than its competitors.

The identification of strengths and weaknesses is based on qualitative reasoning, but this can be supported by both quantitative data (financial ratios, reputation indexes, employee turnover rate, etc.) and qualitative data (workshops, interviews, brainstorming, etc.).

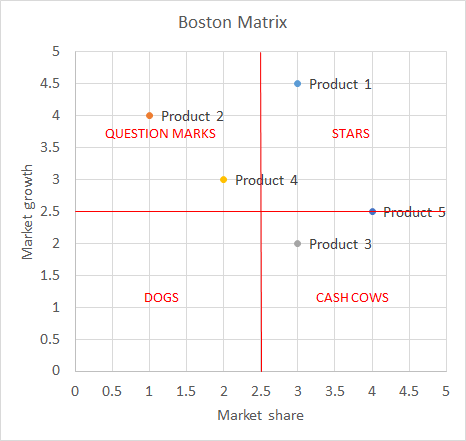

10. BOSTON MATRIX

OBJECTIVE

Decide the best allocation of resources among different products, product lines, or business units.

DESCRIPTION

Created by the Boston Consulting Group, this method aims to decide how to allocate resources depending on the market positioning of different products or services. The market positioning depends on two variables:

-

Market share: more than the simple percentage of the market share, the relative importance depends on the number of competitors, the position according to the market share, and how the company compares to its largest competitor. There is also a big assumption that limits this model: the market share is positively related to earnings and profitability.

-

Market growth: this is the growth rate of a product or service, and it represents not only the prospective growth of earnings but also the attractiveness of a market.

This matrix aids in decision making and is based on the following four categories:

-

Stars: these products maintain the level of investment and strategy and will be cash cows when the market growth declines;

-

Cash cows: these are products with a high market share and low growth; the investment needed is limited;

-

Question marks: it is important to improve the strategy by investing in the right levers to increase the market positioning and sales. The goal is to move these products to the “stars” box;

-

Dogs: here there are two options, either not to invest in these products or to redefine them.

The Boston matrix is considered a useful tool to define strengths and weaknesses in SWOT analysis, but it can also reveal future opportunities for the organization.

The data can usually be gathered from published information concerning industries, sectors, products, or services. If the data are not available, it is possible to estimate the market share by surveying a representative sample of customers.

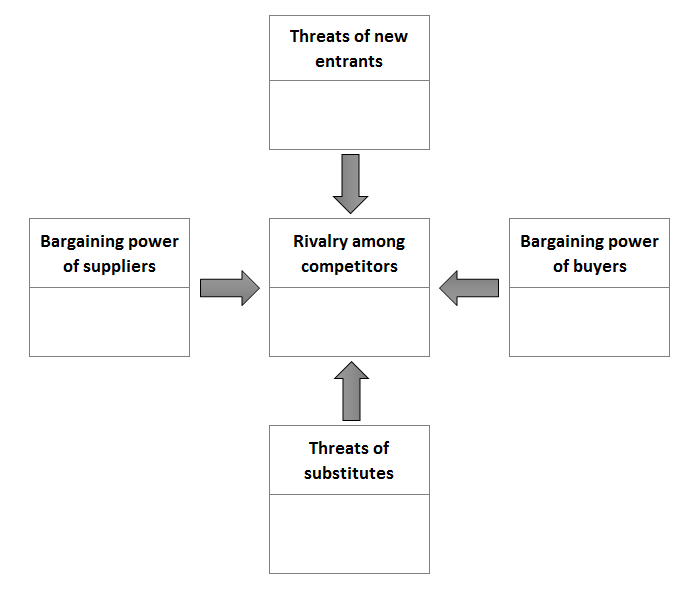

11. PORTER’S FIVE FORCES

OBJECTIVE

Analyze the competitive environment in which the company operates.

DESCRIPTION

This tool is part of the external strategic analysis, and it is useful for completing the SWOT analysis concerning opportunities and threats. While the PESTEL analysis concerns the external environment and macro trends, Porter’s technique focuses on the industry domain.

It is very important before undertaking this analysis to establish the company domain of competition, since this can change the results significantly. The analysis of the competitive environment is undertaken through five categories:

-

Competitors: how strong is the rivalry among competitors?

-

New entrants: how easy is it for new entrants to start competing?

-

Substitutes: how many and how significant are they?

-

Buyers: how many are there and how easily can they change supplier?

-

Suppliers: how many are there and how high are the switching costs for buyers?

The answers to these questions reveal several opportunities or threats for the company; for example, if customers (buyers) have few options and their switching costs are high, this is an opportunity for the company.

The data can be gathered from extensive market research and from industry experts through interviews, workshops, and brainstorming.

12. PRODUCT LIFE CYCLE ANALYSIS

OBJECTIVE

Define the maturity of the industry in which a company is competing or the maturity of a product that it is selling.

DESCRIPTION

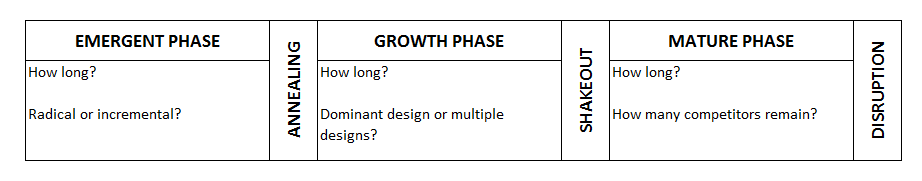

Several studies have been carried out on industries’ life cycles. Industries and products usually start from an emergent phase, pass through a growth phase, and finally reach a mature phase. At this point either they start the cycle again thanks to innovations or they decline.

This is a tool made for reasoning about the maturity of the industry and the maturity of the kind of products being manufactured. To define the phase in which the company is positioned, consider the following:

-

Emergent phase: this is characterized by a small number of firms, low revenues, and usually zero or negative margins;

-

Growth phase: the margins are increasing rapidly (for a while, but less in the last part of the growth phase), as well as the number of firms;

-

Mature phase: the global revenues are increasing at a far slower rate; both the margins and the number of firms are decreasing.

Usually, after the emergent phase, the dominant standards are defined and the rise of one or a few companies is experienced (annealing). Due to rapidly increasing margins, many companies imitate those successful pioneers, and, consequently, the margins start to decrease and a few companies start to leave the market (shakeout). Only the most efficient firms remain in the market during the mature phase, at the end of which we have either decline or disruption thanks to innovation or a demand shift. Finally, the process starts again.

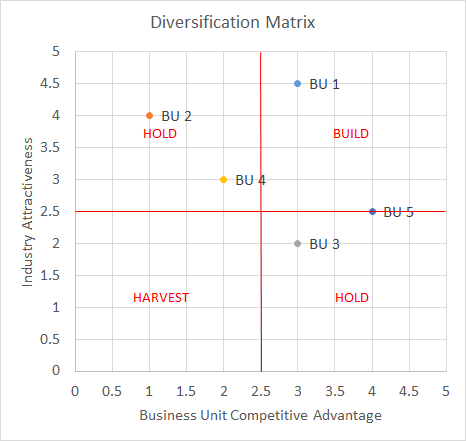

13. DIVERSIFICATION MATRIX (G.E. MATRIX)

OBJECTIVE

Make decisions on diversification strategies.

DESCRIPTION

A firm’s scope can be to broaden by diversifying its products or services, which can be either related or unrelated to the core business. To decide whether to maintain, create, or close specific business units, we must start with a detailed analysis of advantages and disadvantages. A simple matrix is used to explore the main opportunities concerning diversification, which depends on one side on the industry attractiveness of business units (which can be measured by Porter’s five forces analysis) and on the other side on the competitive advantage of the business units.

For business units in the upper-right box, the decision should be to invest more, while for the boxes in which there is either good industry attractiveness or a good competitive advantage, it should be to maintain the current course. Companies should consider closing business units that fall into the lower-left box.

The data are mainly qualitative and usually derived from other analytical tools, for example Porter’s five forces or the industry competitive life cycle for the Y axis (industry attractiveness) and competitive maps or resource audits for the X axis (business unit competitive advantage).

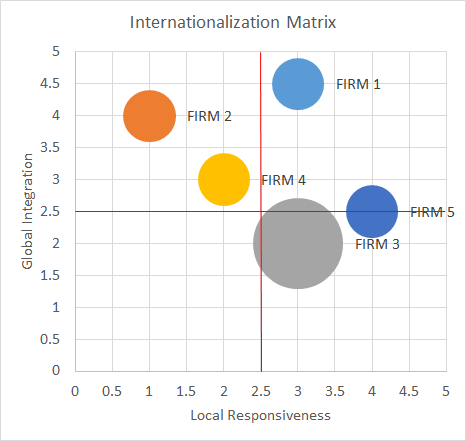

14. INTERNATIONALIZATION MATRIX

OBJECTIVE

Make decisions on internationalization strategies.

DESCRIPTION

An internationalization strategy implies several preliminary analyses to respond to the two main questions: where and how? However, the main trade-off when internationalizing is between the advantages of global integration and the disadvantages of local responsiveness (the need for adaptation).

The matrix with these two indicators can be filled either with different industries (in the case that we want to decide which business unit to internationalize) or with different competitors to identify business opportunities.

With this matrix (in the example of analyzing competitors), it is possible to find opportunities in which competitors are not exploiting the upper-left corner, implement strategies with lower adaptation costs, and obtain better global integration advantages. In addition, opportunities can be found for which the conditions are not optimal for other firms (lower-right corner) and hence there are no competitors there, so it is possible to be profitable. The data for this model are gathered through published articles or studies, industry public data, and experts’ opinions (brainstorming, workshops, etc.).

14 INTERNATIONALIZATION MATRIX

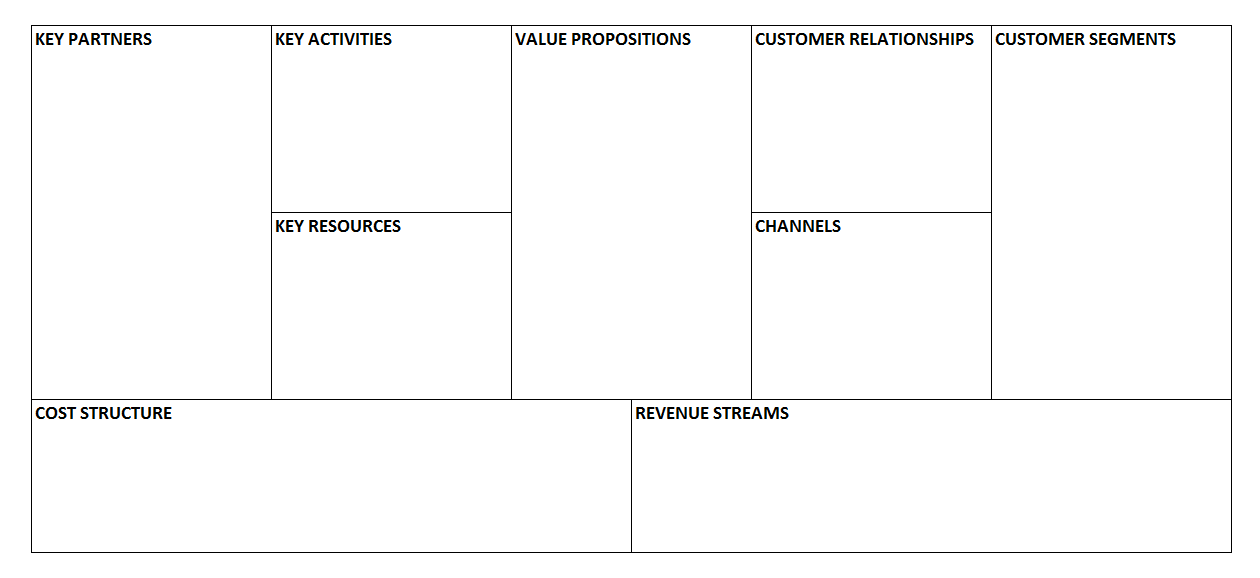

15. BUSINESS MODEL CANVAS

OBJECTIVE

Analyze our own business model or competitors’ business models.[^10]

DESCRIPTION

This tool, invented by Alexander Osterwalder et al.,[^11] can be used to analyze how an organization is creating and delivering value to its customers. Even though its original purpose was to help in creating a new product or business, in this book the business model canvas is presented as an analytical tool, since those kinds of models are not covered. This model can also be useful for understanding whether a company is a competitor or not, since it analyzes the value proposition, which responds to customers’ needs. In fact, companies compete not on products but on the needs that they satisfy or the problems that they solve for their customers.

The study includes the analysis of nine building blocks of the business and how they are related to each other. First we define our customer segments and then the value propositions that we are offering to them. The value proposition is a need that we satisfy or a problem that we solve for a specific customer segment. It is possible that we are offering different value propositions to different customers; for example, a search engine is providing search results to web users and advertising spaces to companies. Then we identify how to deliver this value to our customers (channels) and how we manage our relationships with them (customer relationships). At this point we are able to describe our revenue model (revenue streams). However, to understand how to create our value propositions, we need to identify our key activities, key resources, and key partners. These three blocks allow us to identify our cost structure. For more information about this model, visit the official website[^12] or sign up for the free online course “How to Build a Startup.”[^13]

16. SWOT ANALYSIS

OBJECTIVE

Concerning a company, identify the main strengths to maintain, opportunities to exploit, threats to reduce, and weaknesses to manage.

DESCRIPTION

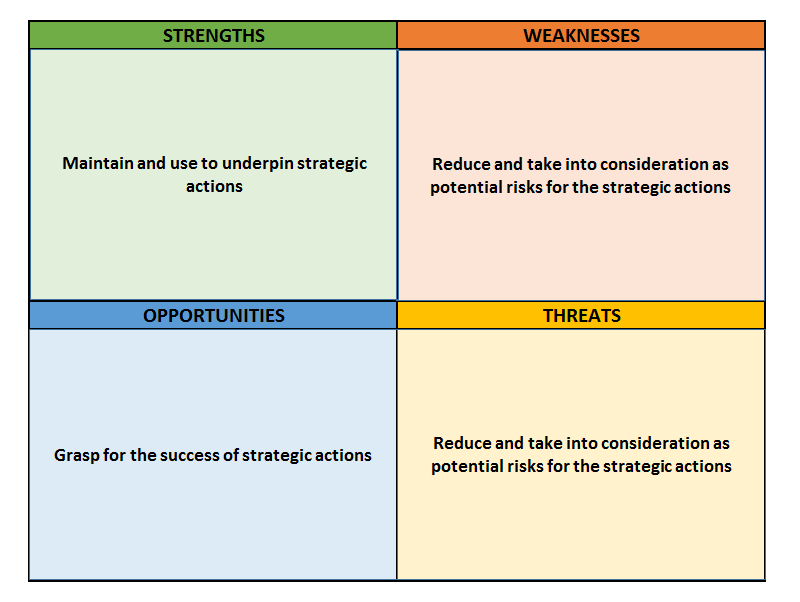

The SWOT analysis is the consolidation of internal and external analyses, and it is used for strategy definition, usually in its first stage, to establish the bases of several strategic actions:

-

External analysis: analysis of the external opportunities and threats resulting from the external environment (PEST, PESTEL); for example, the increasing use of mobile devices can be an opportunity to exploit or the increasing cost of energy can be a potential threat.

-

Internal analysis: internal strengths and weaknesses resulting from the internal analysis (MOST, resource audit, etc.) and from competitive analysis (competitive map, importance–performance matrix). For example, a well-known brand is a strength and a poor company strategy definition is a weakness.

From this matrix the analyst can define strategic actions that exploit existing opportunities, using the company’s strengths as critical success factors and reducing the risks that can be provoked by potential threats and the company’s weaknesses. Since this is a consolidation of several other analyses, the sources of data depend on previous analyses and on several gathering techniques, such as brainstorming, the Delphi method, and surveys. The consolidation process can be performed directly by the analyst, but it is usually a good practice to consolidate the results or submit the analysis to other company members, for example through workshops or think tanks.

17. ANSOFF’S MATRIX

OBJECTIVE

Define the most appropriate business strategy based on the existence of markets and products.

DESCRIPTION

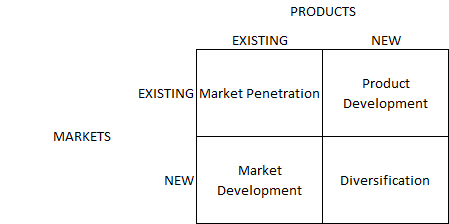

Ansoff’s matrix is usually performed after a SWOT analysis, in which strengths, weaknesses, opportunities, and threats can be transformed into business strategies:

-

Market penetration: the organization decides to use existing products in the existing market by improving tactics and strategies to push sales, for example through advertising, promotion, and pricing;

-

Product development: the organization decides to develop new products for an existing market or to add new features;

-

Market development: the organization decides to sell existing products to new markets, for example exporting to new countries;

-

Diversification: the organization decides to take a more radical approach by creating a new product for a new market. This can be the result of an opportunity caused by a new trend, identified in the SWOT analysis.

Once the strategy has been defined using Ansoff’s matrix, the objectives, strategies, and tactics can be revised in the VMOST model. The information needed for this matrix can usually be found in previous internal analysis, external analysis, and SWOT analysis.

Pricing and demand

18. Pricing and demand overview

Setting the right price is one of the most complicated decisions to take but also one of the most rewarding. To choose the price that maximizes revenues and benefits, it is necessary to determine the willingness to pay (WTP) of customers. Ideally, we should define the WTP of each customer, but in most cases it is only possible to establish the WTP for several customer segments.

We can define the WTP as the highest price that a person will accept to buy a product or service. However, it is useful to differentiate between two concepts: the maximum price and the reference price.[^14] The maximum price is the value of a reference product plus the differentiation value of the product of interest; for example, the WTP of brand B is the value of brand A plus the differentiation value between the two brands. The reference price is the highest price that a person would pay for a product or service assuming that she has no alternative. In conclusion, we can say that the WTP is either the maximum price or the reference price depending on which is the lowest. Several methods can be used for the estimation of the WTP, which can be divided into two main groups: observations and surveys.

Figure 15: Classification of Methods for WTP Estimation^14^

**

MARKET DATA

The advantage of market data is that they are easily accessible and imply no cost other than the time dedicated to the analysis. However, there are several drawbacks:

-

Usually there is not enough price variation in the data to test the necessary range of WTP;

-

We are only analyzing our customers and not the market as a whole;

-

We can just define whether an individual has a higher WTP than the selling price, and we lose information about customers who decided not to purchase the product;

-

Usually demand curves are estimated through regressions, and in this case several conditions have to be met (see 36. INTRODUCTION TO REGRESSIONS ).

EXPERIMENTS

There are two main kinds of experiments:

-

Laboratory experiments: purchase behavior is simulated in scenarios in which prices and products are changed (a main drawback is that the purchase intention can be biased, since the participants are aware of the experiment and because they are not spending their money or real money);

-

Field experiments: these are tests in which different prices and products are offered to customers for real purchase.

A general drawback of experiments is that they are quite costly.

DIRECT SURVEYS

Surveys are a faster and less expensive method for determining the WTP. Either experts or customers directly can be interviewed. In the first case, sales and marketing experts are asked to predict the WTP of customers. This method usually performs better in situations in which the number of customers is small, while it performs poorly when the customer base is large and heterogeneous. In this case it is preferable to conduct customer surveys to ask customers their WTP directly. A widely used technique is the one proposed by Van Westendorp. Although this method is easy and direct, it has several drawbacks:

-

Customers can have incentives to underestimate their WTP (to influence real product prices) or to overestimate their WTP (to show a better social status in front of researchers);

-

Even if customers try to reveal their true WTP, this is a difficult and complicated task and the response can be biased unconsciously by several factors;

-

If buyers have no market reference, they can overestimate their WTP.

**

INDIRECT SURVEYS

With indirect surveys a situation more similar to the purchase process is presented, since the respondents are asked to indicate whether they would purchase a specific product with a specific price or not. For this reason the performance tends to be better than that of direct surveys. There are two main techniques: conjoint analysis and discrete choice analysis.

Conjoint Analysis

Different products with varying attributes and price are presented to the respondents, who are asked to rank them. Since it is not possible to determine whether the respondents would buy the product at the presented price, usually they are also asked to identify a limit below which they will not buy the product. A disadvantage of this method is that the usual market prices are shown; therefore, if the respondents’ WTP is not included in these prices, the estimated WTP can be quite different from the true one.

Discrete Choice Analysis

As in conjoint analysis, products are decomposed into attributes for which the utility is calculated. However, in discrete choice analysis, the respondents have to choose a product from a set of several alternatives. The main methodological difference is that attributes’ utility is estimated at an aggregated level (the population), while in conjoint analysis it is calculated for each individual. To estimate the utility at the individual level, the results of discrete choice analysis can be processed using a hierarchical Bayes approach.

19. PRICE ELASTICITY OF DEMAND

OBJECTIVE

Determine the price sensitivity of demand.

DESCRIPTION

The price elasticity of demand is a measure that represents how much the demand will change due to a change in the price. With a price elasticity of “1,” a 5% increase in the price means that the demand will increase by 5%. However, the price elasticity of demand is usually negative, since an increase in the price is likely to produce a decrease in the demand. Elasticities of -1 or 1 are considered to be “unit elasticities,” since a variation in the price provokes a proportional variation in the demand (positive or negative). Elasticities with an absolute value less than 1 (for example 0.4 or -0.6) are considered to be inelastic, since a variation in the price results in a less than proportional variation in the demand, while elasticities larger than 1 are considered to be elastic.

To calculate the price elasticity, this formula can be applied:

PED = (%ΔQd) ÷ (%ΔP)

where the percentage change in the demand is divided by the percentage change in the price. We can either take the two nearest offered prices or calculate the elasticity by taking more distant prices. We can also calculate the elasticity using midpoints between offered prices. With a PED of -0.4, if we increase the price by 20%, we can expect the demand to decrease by 8% (-0.4 * 0.2 = -0.8). However, this calculation is less precise for large price variations. It is usually better to calculate “arch elasticities,” in which the change in the demand is calculated incrementally at each 1% price variation (in our case 20 times). With this calculation our reduction in the demand is estimated to be 7%: 1 - (1.2 ^ (-0.4)).

In addition, when calculating the PED, it is important to consider timing and inflation:

-

Timing: if our data are spread across a large period of time (i.e. several years), we must consider that the demand could be different due to a change in consumer preferences, new substitutes, and so on. The supply could also have changed, and this has an important effect on the price equilibrium with demand.

-

Inflation: we should use real prices, since for example if our price has increased by 3% but inflation is about 3%, then in practice our price has not changed.

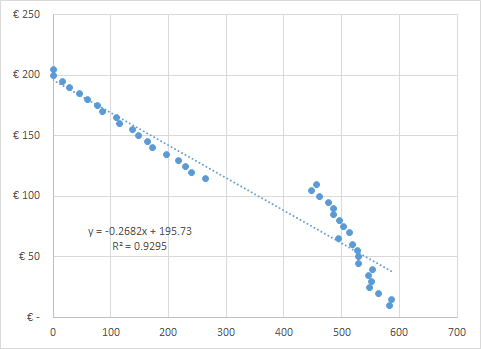

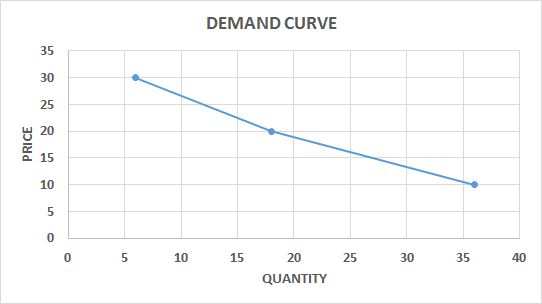

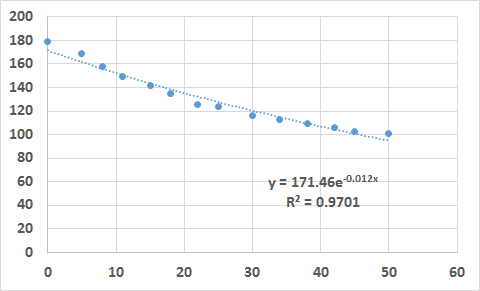

The data can be analyzed in more detail using a regression (see 38. LINEAR REGRESSION ). For example, in Figure 16 the price elasticity changes drastically at a certain price level. In other cases the data can be too scattered. In either case we should consider segmenting the data, since this can be caused by the heterogeneity of the respondents (e.g. seniors are less price sensitive while young people are far more price sensitive).

The data used for a PED calculation can be either real data from transactions or survey data. If we use transaction data, we must be able to exclude demand variations due to different factors from the price (we can for example include other predictor variables in the regression – see “Multivariate Regression” in chapter 39. OTHER REGRESSIONS ). If we use survey data, the pricing models described in the following chapters can be considered.

20. GABOR–GRANGER PRICING METHOD

OBJECTIVE

Define the optimal price range for a product or service.

DESCRIPTION

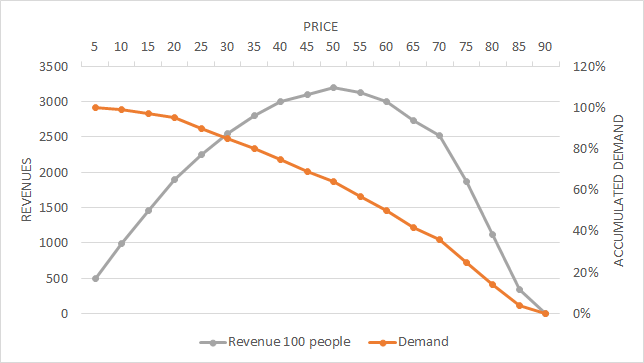

This method is useful in taking general pricing decisions. Data are collected through surveys in which each respondent is asked about his intention to purchase and shown several prices that move up or down depending on the previous answers. Alternatively, prices can be shown randomly or in a fixed series. The highest price at which a respondent reports that he would buy is considered to be his WTP. Once we have a specific price limit (WTP) for each interviewee, we can draw an accumulated demand curve.

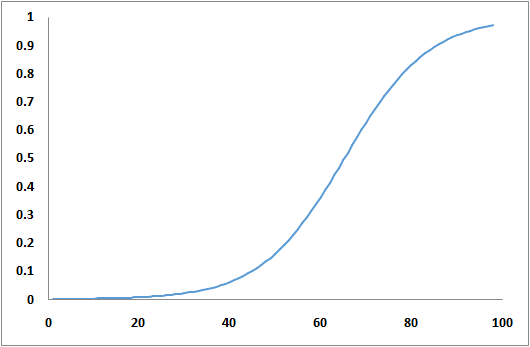

Since we have the information about demand and WTP available, we can calculate the revenue curve in the graph and establish the optimal price at which revenues are maximized (Figure 17 ).

21. VAN WESTENDORP PRICE SENSITIVITY METER

OBJECTIVE

Determine consumer price preferences.

DESCRIPTION

People are asked to define prices for a product at four levels: too cheap, cheap, expensive, and too expensive. The questions usually asked are:

-

At what price would you consider the product to be so expensive that you would not buy it? (Too expensive)

-

At what price would you consider the product to be so inexpensive that you would doubt its quality? (Too cheap)

-

At what price would you consider the product to start to be expensive enough that you could start to reconsider buying it? (Expensive)

-

At what price would you consider the product to be good value for money? (Cheap)

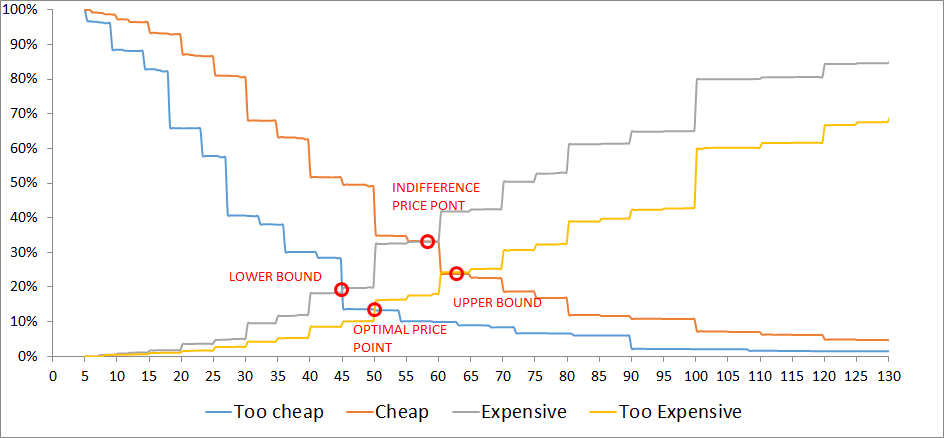

The results are organized by price level, with the accumulated demand for each question. The demand is usually accumulated inversely for the categories “cheap” and “too cheap” to define crossing points with the other two variables (Figure 18 ).

From the four intersections, we have the boundaries between which the price should be settled (lower bound and upper bound). Although the other two price points are sometimes used, I prefer to use this model to define the lead prices and upper prices for a product, while the middle prices should not be static but should change based on several factors (period of purchase, place, conditions, etc.).

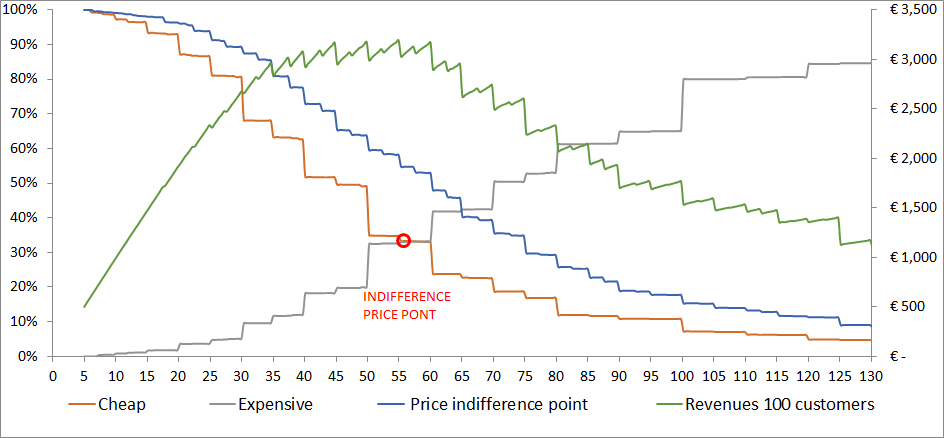

With this model we can define price boundaries, but we cannot estimate the purchase likelihood or demand. For the estimation of the demand (and revenues), we ask an additional question regarding the likelihood of buying the product at a specific price with a five-point Likert scale (5 = strongly agree, 1 = strongly disagree). The price to be tested can be the average of the “cheap” price and the “expensive” price for each respondent. A more comprehensive approach would be to ask the question for both the “cheap” and the “expensive” price. Then the results must be transformed into purchase probabilities, for example strongly agree = 70%, agree = 50%, and so on. With these results we can build a cumulative demand curve and a revenue curve (Figure 19 ). The optimal price is the one at which the revenues are maximized (be aware that this approach aims to maximize revenues and does not take into account any variable costs).

22. MONADIC PRICE TESTING

OBJECTIVE

Analyze people’s purchase intention at different price points and for alternative products.

DESCRIPTION

In monadic price testing, purchase behavior is tested for several price points, but each respondent is shown just a single price. Due to this method, a large base of respondents is necessary. A variation that needs a smaller sample is sequential monadic testing, in which the respondents are shown different price points, one at a time (usually no more than three price points are presented to each respondent). It is important to bear in mind that sequential monadic testing implies some biases and usually shows a higher purchase intention at the lower prices than monadic testing.

This is probably the best method for analyzing purchase behavior at a given price; however, it is only useful if we have an idea of the appropriate price points for a particular market. If this is not the case, we would need to obtain this information prior to the analysis, either through direct or indirect survey methods (see 18. INTRODUCTION).

Once the data have been collected, we can summarize the purchase behavior for the different price points (e.g. 11% of the market would purchase the product at €30, €32% at 20, etc.), and we can estimate a demand curve. The data are usually collected through surveys but can also be obtained from controlled experiments.

23. CONJOINT ANALYSIS

OBJECTIVE

Identify customers and potential customers’ preferences for specific attributes of a product. It can also be used to define the willingness to pay and the market share of different products.

DESCRIPTION

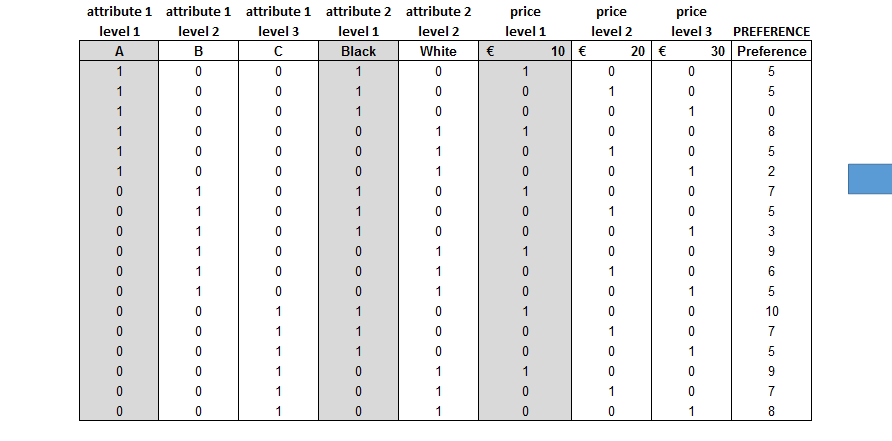

Conjoint analysis is a surveying technique used to identify the preferences of customers or prospective customers. The respondents are shown several products with varying levels of different attributes (e.g. color, performance) and are asked to rank the products. This ranking is then used to calculate the utility of each attribute and product at the individual level. The results can be used to define the best combination of attributes and price or to simulate market share variations with competitors (if competitors’ products are presented).

First of all it is very important to spend enough time designing the analysis, starting with the selection of the most important attributes and attributes’ levels.

There are three kinds of methods:

-

Decompositional methods: the respondents are presented with different product versions, they rank them, and then the utilities are calculated at the attribute level by decomposing the observations;

-

Compositional methods: the respondents are asked to rate the different attributes’ levels directly;

-

Hybrid methods: compositional methods are used in the first phase to present a limited number of product versions in the second phase (they are useful when we have a large combination of attributes and levels).

In addition to the methods described above, several kinds of adaptive conjoint analysis are used to increase the efficiency of conjoint analysis, especially when the number of attributes is large.

In conjoint analyses the price is usually included as an attribute and the price utility is calculated. However, this creates several problems:

-

By definition the price has no utility but is used in exchange for the sum of attributes’ utilities of the product;

-

The price ranges, number of levels, and perception of the respondents can bias the answers;

-

The purchase intention is not included, so we do not know whether the respondent would actually buy the product at the presented price (to avoid this problem partially, the respondents are usually asked to define a limit in the ranking below which products are not purchased).

The willingness to pay is calculated as the exchange rate between price utility and attribute utility. However, to avoid the abovementioned problems, we should consider a different approach, for example dividing the analysis into two phases:

-

Perform a classic conjoint analysis for non-price attributes to define utilities;

-

Ask for the purchase intention of full product profiles with varying prices to define the lower and upper boundaries between which the respondent would agree to purchase the product.

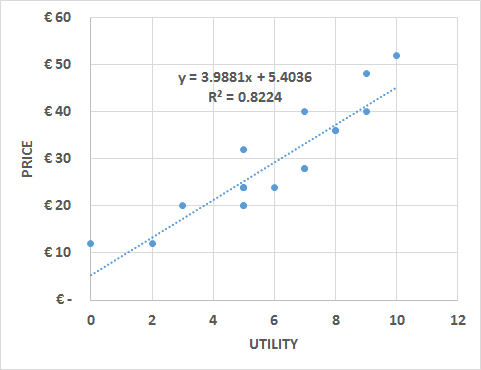

With this information a linear function can be estimated in which the price is the dependent variable and the utility is the independent variable.

In the example we present a classic conjoint analysis that includes the price as an additional attribute. It includes one three-level attribute, one two-level attribute (color), and three levels of price. Full-profile products are presented to the respondents and they are asked to give a preference on a scale from 0 to 10 (10 being the most preferred product) instead of ranking the products.

The utility of a respondent is calculated by removing one level for each attribute to perform a multiple linear regression with dummy variables. The removed variables will have a utility of “0,” while the attributes included in the regression will have the utility corresponding to the regression coefficients. After verifying the significance of each attribute (p-value < 0.05; see 38. LINEAR REGRESSION ), the coefficients can be summed to build the utility equation.

The utility equation at the individual level can be used to define the most profitable combination of attributes and price. It also allows the building of scenarios in which shifts in the market share are calculated due to changes in the price or products’ attributes compared with the products offered by competitors. Especially for the market share scenarios, it is important to define the purchase intention by asking the respondents to state a “limit” beyond which they will not purchase the product.

In the template a second sheet is presented in which the price is not included as an additional attribute but the respondents are asked about it separately, either directly or by showing them different price–product combinations and asking for their purchase intention. The last example usually performs better, but if we have numerous combinations, we cannot show all of them.

There are two main approaches when creating surveys for conjoint analysis:

-

Classic conjoint: the respondents are shown all the combinations of attributes’ levels and are asked either to rank them or to define their preferences on a certain scale (e.g. 0 to 10). If the number of combinations is too large, we should either split the combinations and present them several times to the respondents or present only a certain percentage of all the possible combinations (randomly selected). We should also ask for a “limit,” that is, the ranking position or preference level at which the respondent would change his purchase intention.

-

Conjoint in which the price is not an attribute: the process is the same as the classic conjoint analysis, but the price is not included as an attribute. After asking the respondents to rank or set their preferences concerning several combinations of attributes’ levels, they are asked whether they would purchase a specific combination at a specific price. Depending on the response, either the utility or the price is modified to identify the WTP. If the number of combinations is limited, each one can be tested; if the number is large, not all combinations can be tested and the WTP must be calculated for different levels of utility and can then be estimated for all the combinations.

24. CHOICE-BASED CONJOINT ANALYSIS

OBJECTIVE

Identify customers and potential customers’ preferences for specific attributes of a product. It can also be used to define the willingness to pay and market share of different products.

DESCRIPTION

This method is preferred to conjoint analysis because it represents a more realistic purchase situation and, in the case of having a large number of possible combinations, because it is sufficient to show only a certain number of combinations to each respondent. Then the responses are analyzed together and the utility is defined at the aggregated level (not at the individual level as in conjoint analysis).

For this method it is also very important to choose carefully the attributes (as a rule of thumb, no more than seven including the price) and the product profiles to present, that is, the combinations of attributes. Once the attributes and product profiles have been defined, choice scenarios are designed. A scenario is a combination of several products that is presented to the respondents. When defining scenarios, these recommendations should be followed:

-

A “none” choice should be included among the products presented in each purchase scenario;

-

Each scenario should not have more than 5 products;

-

Between 12 and 18 scenarios are usually presented to each respondent.

Usually, all the combinations cannot be presented in the same scenario, and a good practice is to show from two to five products in each scenario. When choosing the combination of products for each scenario, it is important that all the products are shown an equal number of times and that each product is compared equally with other alternatives.

Once the data have been collected, utilities are estimated at the aggregate level. The market share of each product can be calculated using the “share of preferences”:

-

Products’ utilities are calculated by summing all the attributes’ utilities;

-

Products’ utilities are exponentiated;

-

The market share is calculated as the product’s exponentiated utility divided by the sum of all the exponentiated utilities.

To obtain utilities at the individual level, a method called “hierarchical Bayes” is used. This method enables us to calculate a more reliable market share based on the choice of each respondent using three main techniques:

-

First choice: each respondent chooses the product that maximizes her utility (this technique is suggested for expensive products that imply a careful evaluation, such as houses and cars);

-

Share of preference: each respondent purchases a share of each product based on the share of utilities (suggested when a product is purchased several times during a certain period);

-

Randomized first choice: each respondent chooses one product with a probability proportional to its utility.

This method is also useful for predicting variations in the market share compared with competitors by creating simulations in which prices or other products’ attributes are changed. For example, we can analyze whether a discount can attract a big enough market share to compensate for the reduction in price. In this kind of simulation, we assume that competitors are not modifying both attributes and price, but in reality this could not be the case. This is why we should at least simulate several scenarios including possible competitors’ reactions. A more complex approach would be to include a game theory model (see 76. GAME THEORY MODELS ).

Choice-based conjoint is not included as a single Excel file here; use specialized software or complements. XLSTAT CBC example

25. Customer analytics overview

Unlike the other models described in this book, customer analytics analyzes, predicts, and prescribes at the very individual level. The main idea is that a company has at its disposal “customer equity,” which is the sum of the values of each customer. This value is determined by several clients’ behavior, such as the amount spent, purchase frequency, level of recommendation, and so on.

Customer analytics allows companies to segment their customer base properly and personalize products and services for each group of clients or even each individual client. Personalization enables them to retain their most valuable customers and increase customers’ value by properly implementing commercial techniques, such as upselling, cross-selling, recommendation programs, and so on.

Data at the individual level are more complicated to obtain and more complex to analyze than aggregated data. The majority of this information comes from historical market data (a company’s data concerning purchases, customers’ actions, and profiling data), but they are usually complemented with survey data.

26. RFM MODEL

OBJECTIVE

Estimate the lifetime value of a customer or group of customers.

DESCRIPTION

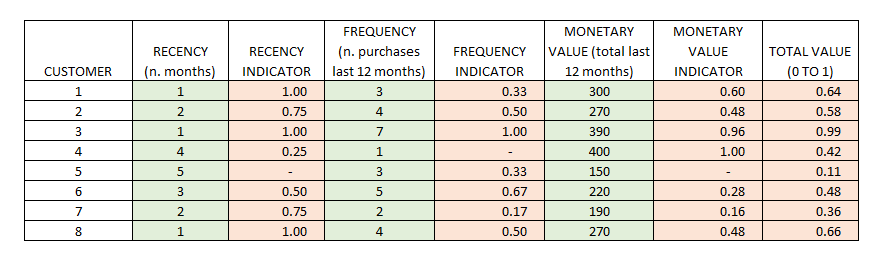

This is probably the simplest model for the estimation of customers’ value. In spite of its simplicity, it is also famous for its reliability, which is based on three variables:

-

Recency: the more recent the purchase or interaction, the more inclined the client is to accept another interaction;

-

Frequency: the more times a customer purchases, the more valuable he or she is to the company;

-

Monetary value: the total value of a customer also depends on the amount spent in a given period.

Usually, these three variables are transformed into comparable indicators (for example into a “0 to 1” indicator) and summed up to obtain a total value indicator. We can also define different weights for each indicator.

27. CUSTOMER LIFETIME VALUE 1

OBJECTIVE

Estimate the lifetime value of a customer or group of customers.

DESCRIPTION

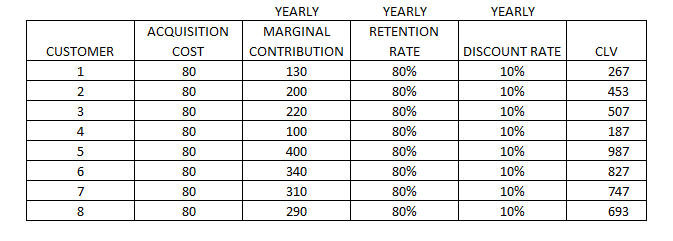

Customer lifetime value is an indicator that represents the net present value of a customer based on the estimated future revenues and costs. The main components of this calculation are:

-

Average purchase margin (revenue – costs);

-

Frequency of purchase;

-

Marketing costs;

-

Discount rate or cost of capital.

There are several ways to calculate it, and different formulas have been proposed. The most difficult part is to estimate customers’ retention (in contractual settings) or repetition and to estimate the monetary amount that a customer will spend in the future. It is important to remember that CLV is about the future and not the past, which is why using past data of a customer is not the best method for calculating CLV. A good practice is to segment customers and estimate the retention and spending patterns based on similar customers. Then, the following formula can be applied:

CLV = MC × r / (1 + d − r) − CA

CLV = customer lifetime value

MC = yearly marginal contribution, that is to say the total purchase revenue in a year minus the unit costs of production and marketing

R = retention rate (yearly)

D = discount rate

CA = cost of acquisition (one-time cost spent by the company to reach a new customer)

The discount rate can be the average cost of capital for the company or the related industry, and it is used to depreciate the value of future benefits to estimate what they are worth today.

With this formula we can estimate the CLV of a single customer or a segment of customers. In the case of estimating it at the individual level, we should use the retention rate (r) of similar customers, for example customers who buy similar products, or more sophisticated techniques, for example cluster analysis.

When we define the value of a customer or a group of customers, we can make decisions concerning the level of attention, the investment in marketing and retention costs, or the amount that we can spend (cost of acquisition) to attract customers with a similar CLV.

Even though it is quite difficult to estimate, we have to consider that the CLV formula does not take into account the value generated by referrals. Although some formulas have been proposed,[^16] this calculation is seldom used due to the lack of information. In fact, to calculate the customer referral value, we need information about the advocates and the referred customers, and for the latter we should be able to distinguish those who would have made the purchase anyway (without the referral). As a proxy we can use the NPS (see 29. NET PROMOTER SCORE^®^ (NPS^®^) ) combined with other information from surveys, such as asking whether a customer has been referred and how much the referral has affected the purchase.

The market’s historical data is the main source of information (at the individual level, usually from CRM systems), but it can be enriched with survey data, for example concerning the likelihood of repeating the purchase or recommending the product.

28. CUSTOMER LIFETIME VALUE 2

OBJECTIVE

Estimate the retention and future spending amount of customers.

DESCRIPTION

In the previous chapter I explained the principles of CLV and its calculation. However, one of the problems was the estimation of the retention rate (for which the simplification was to apply the average retention rate of similar customers) and future spending amounts (we assumed that the average spending amount of each customer will not change in the future). Despite the facility of the implementation of this approach, it can be much too simplistic and fail to estimate CLV reliably.

There are several methods for estimating retention and spending amounts, but some of them can be far too complex. The method that I will propose has a good balance between accuracy and implementation simplicity and is based on customer segmentation and probability.

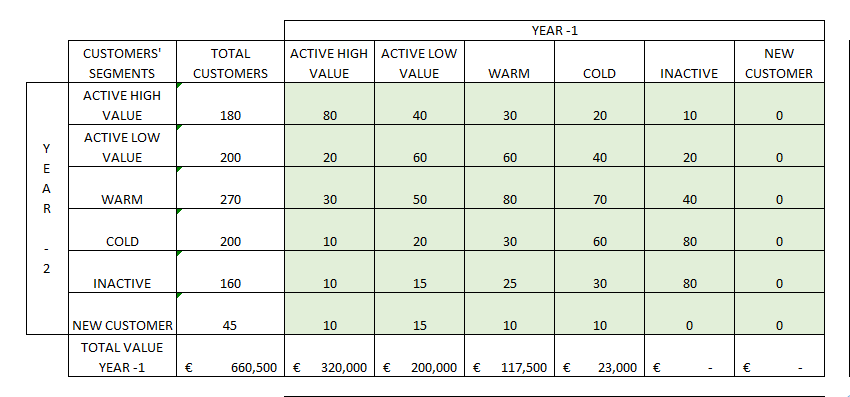

The first step is to take the customer data of year -2 and segment them based on their value and their activeness. For the value we can use the amount spent in a specific year (which is a mix of the average amount spent per purchase and the frequency of purchases), and for activeness we can use the recency of the last purchase (for example the number of days between the last purchase and the end of the analyzed year). For activeness we can also use a mix of recency and frequency. In the second step, we have to define a certain number of customer segments. The segmentation technique can be either a simple double-entry matrix or a statistical clustering technique. We can for example end up with six clusters:

-

Active high value

-

Active low value

-

Warm

-

Cold

-

Inactive

-

New customers

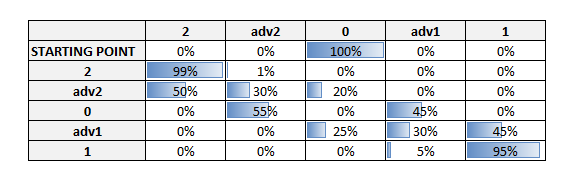

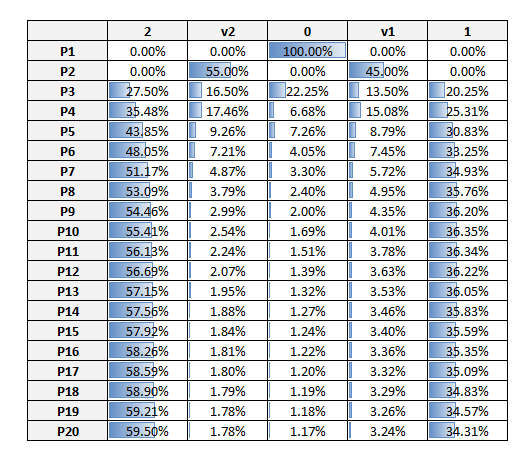

The idea behind this technique is to estimate the retention and spending amount using the probability of a customer remaining in the same segment or changing segment and by applying to this customer the average spending amount of the new segment. To calculate the probability of moving from one segment to another, it is necessary to segment the customers into year -1 and create a transition matrix (transition among different segments from year -2 to year -1) in which probabilities are calculated for each combination of segment groups.

With the probability transition matrix we can simulate how the segments will change in the future and maybe realize that we are dangerously reducing active customers in favor of inactive ones and that we need to acquire a slightly bigger number of each kind of customer to avoid a decrease in profits. In any case with this matrix we can simulate several years ahead and estimate how many customers will still be active. We can also estimate their value by multiplying the average value of each segment by the number of customers of the same segment in a specific year (year 0, year +1, year +2, etc.).

In the proposed template, I have added an estimation of new customers acquired each year to simulate the total number of customers and their value a few years ahead. However, to calculate the CLV of the current customers, this value should be set to 0 and then the total value of each year discounted by the discount rate.

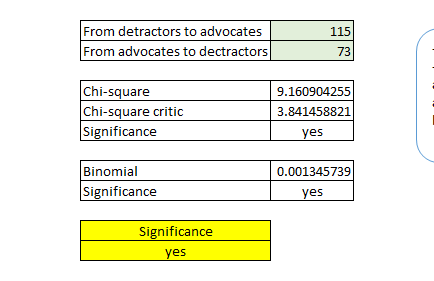

29. NET PROMOTER SCORE^®^ (NPS^®^)

OBJECTIVE

Identify customers’ likelihood of making recommendations.

DESCRIPTION

Usually the value of customers is calculated using only the variables amount spent and frequency of purchases. However, customers can create value in several other ways, one of which is by recommending the service to other potential buyers. The so-called “word-of-mouth” phenomenon is nowadays empowered by social networks, metasearch websites, or portals that provide customer feedback on products.

Positive recommendations can not only increase sales but also allow companies to save money on advertising. The Net Promoter Score® is an indicator that estimates the probability of recommendation of a group of customers based on the recommendation intention of single customers. It was developed and registered as a trademark by Fred Reichheld, Bain & Company, and Satmetrix.

The data are collected through surveys, and the respondents are asked to score from 0 to 10 the likelihood of recommending the product or service. Those who respond 9 or 10 are the real promoters, while those who respond 6 or lower are the detractors. The respondents whose score is from 7 to 8 are considered passive, since, even if they say that they would recommend the product or service, in reality they do not recommend it. The NPS® is the percentage of promoters minus the percentage of detractors.

30. CUSTOMER VALUE MATRIX

OBJECTIVE

Identify customer segments according to the value that they provide to the organization.

DESCRIPTION

A particularly interesting article published in the Harvard Business Review[^17] states that the customers who buy the most are not necessarily the most valuable customers, since, according to the authors, the most valuable customers are those who are able to bring in new valuable clients through recommendations. The referral value of customers includes not only the value generated by the new customers but also the savings on the acquisition costs (advertising, discounts, etc.). In this article both customer lifetime value and customer referral value are calculated properly, but usually these data are hardly available, mainly due to the fact that identifying those customers who made recommendations and the new customers obtained thanks to the recommendations is a complex task.

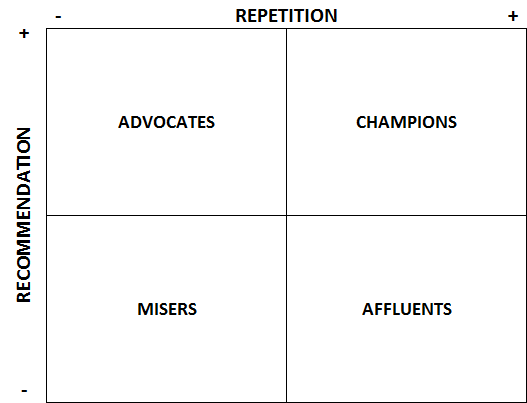

However, we can take the customer value matrix based on their conclusions and apply it to our customer data in a simpler way. For this simplification we assume that “repetition” is correlated with “lifetime value” and that the intention to recommend a product is correlated with new customers gained through advocacy. These data can be gathered through a survey in which we ask about repetition (actual or intended) and whether the customer would recommend the product. With the results of these two variables, we create a four-block matrix:

-

Champions: they are high buyers and good advocates;

-

Affluents: they are high-spending customers but do not tend to make recommendations;

-

Advocates: they are the customers who make the most recommendations but do not buy a lot;

-

Misers: they are low-value customers in both purchasing and recommending.

To assign customers to the right box, we can take the median of the two indicators. The purpose of this model is to differentiate our marketing techniques:

-

Affluents: offer them incentives in exchange for recommending products to a few friends;

-

Advocates: focus on upselling or cross-selling, for example by offering bundled products or discounts for products that they are not buying;

-

Misers: try the two abovementioned techniques to move them either to the affluents or to the advocates (or maybe to the champions).

We can also improve the model by adding a value component besides the repetition, for example the money spent on average for each purchase.

A drawback of using this simplification is that we must be able to relate the survey to a real customer to differentiate our marketing techniques. Hotels, for example, use check-out surveys and ask their customers about repetition and recommendation.

The data are gathered through online or offline surveys in such a way that the survey can be related to a customer (through email, transaction identification number, etc.).

31. CUSTOMER PROBABILITY MODEL

OBJECTIVE

Predict the long-term future behavior of customers.

DESCRIPTION

Customer behavior can be predicted by regressions or willingness-to-pay methods; however, while these methods are good at predicting actions for “period 2,” the more we try to predict further into the future, the more these methods are inaccurate. A customer probability model can help in refining the customer lifetime value calculation.

To move beyond “period 2,” we should use a probabilistic model based on pattern recognition. Quite a simple model that can be implemented in Excel is the “Buy Till You Die” model,[^18] which needs three inputs: recency, frequency, and the number of customers for each combination of the previous two variables.

This model can be applied in discrete-time settings, which means that it can be applied either to cases in which the customer can buy a product on specific purchase occasions (for example when participating in an annual conference) or to cases in which a discrete purchase interval is created (time can be “discretized” by a recording process, for example whether or not a customer went on a cruise or stayed at a specific hotel during a year).

The model proposed by Peter S. Fader and Bruce G.S. Hardie^18^ uses a log-likelihood function estimated using the Excel plug-in Solver. They explain how to build the model from scratch and provide an Excel template (see the template link below) in which in the last sheet they preset the DERTs (discounted expected residual transactions), which are “the expected future transaction stream for a customer with purchase history.”

This model uses historical transactional data of the company at the individual level. These data are usually stored in CRM systems.

Buy Till You Die template (authors)

32. SURVIVAL ANALYSIS

OBJECTIVE

Estimate the expected time for an event to occur.

DESCRIPTION

Survival analysis is used for example in medicine (the survival of patients), in engineering (the time before a component breaks), or in marketing (the customer churn rate) to estimate when an outcome will occur. When undertaking this kind of analysis, it is important to consider the concept of “censoring,” that is, the issue that values are only partially known. This is due to the fact that, at the moment of the measurement, for some individuals (or objects) the event has already occurred but for others it has not.

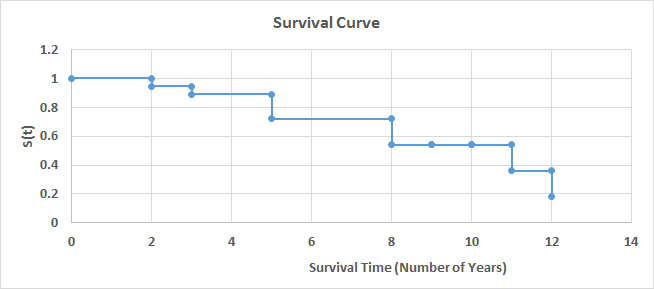

Two of the most-used techniques are the “Kaplan–Meier” method and the Cox regression. The first one is a non-parametric statistical technique that is used when we have categorical variables or the number of observations is small. The example developed in the template uses this technique to estimate the probability of a mechanical component surviving (not breaking) after a certain number of years. Figure 27 shows the survival curve chart, which depicts the survival function.

In addition to the survival curve chart, a life table can be used to analyze the results. This table contains the number of years, number of events, survival proportion, standard error, and confidence intervals. In the case in which we need to compare the survival function of two groups, we can use the log-rank test to verify whether the two curves are significantly different.

If the number of observations is large and we want to include additional explanatory variables, then we should use a Cox regression.

Survival analysis can be very useful when we need to prioritize efforts. For example, we can estimate when employees are about to leave the company using a Cox regression with several explanatory variables (years in the company, number of projects, satisfaction, performance, etc.). We can then create a matrix using the survival function on one side and performance on the other side and focus our efforts on those who present both a high performance level and a high probability of leaving.

For this kind of analysis we need a specific tracking of the “survival time” of the concerned object. We can also obtain this data in a CRM, for example concerning subscriptions and unsubscriptions of customers to a certain service.

33. SCORING MODELS

OBJECTIVE

Define the priority of action concerning customers, employees, products, and so on.

DESCRIPTION

Scoring models help to decide which elements to act on as a priority based on the score that they obtain. For example, we can create a scoring model to prevent employees leaving the company in which the score depends on both the probability of leaving and the performance (we will act first on those employees who have a higher probability of leaving and are important to the company). Scoring models are also quite useful in marketing; for example, we can score customers based on their probability of responding positively to a telemarketing call and, based on our resources, call just the first “X” customers.

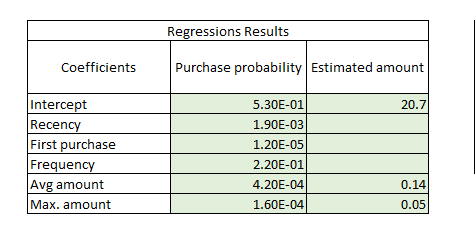

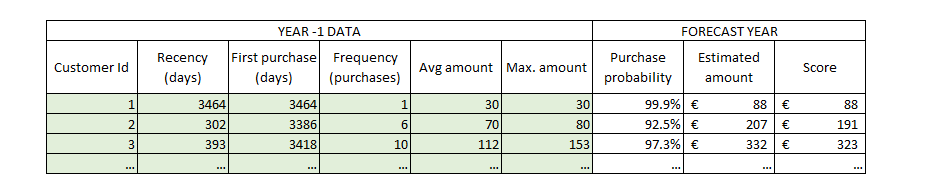

The model that I will propose concerns a scoring model of customers’ value based on the probability of purchasing a product and on the amount that they are likely to spend. This model is the result of two sub-models:

-

Purchase probability: We will use a logistic regression to estimate the purchase probability of a customer in the next period (see 26. RFM MODEL and 60. LOGISTIC REGRESSION );

-

Amount: We will use a linear regression to estimate the amount that each customer is likely to spend on his or her next purchase (see 38. LINEAR REGRESSION ).

The first step is to choose the predictor variables. In our case I suggest using recency, first purchase, frequency, average amount, and maximum amount of year -2, but we could try additional or different variables. The target variable will be a binary variable that represents whether the client made a purchase during the following period (year -1). A logistic regression is run with the eventual transformation of variables and after verifying that all the necessary assumptions are met (see 36. INTRODUCTION TO REGRESSIONS and 60. LOGISTIC REGRESSION ).

In the second part of the model, we can use for example only the average amount and the maximum amount of year -2, and the total amount spent in year -1 is used as the target variable. We run a multivariate linear regression with the eventual transformation of the variables, after verifying that all the necessary assumptions are met (see 36. INTRODUCTION TO REGRESSIONS , 38. LINEAR REGRESSION , and 39. OTHER REGRESSIONS ). It is important to note that in this regression we will not use the whole customer database but select only those customers who realized a purchase in year -1.

The last step is to put together the two regressions to score customers based on both their purchase probability and the likely amount that they will spend. We use the regression coefficients for the estimates of each customer. In the linear regression, we directly sum the intercept and multiply the variables’ coefficients (Figure 28 ) by the actual values of each customer to estimate the amount.[^19] However, in the logistic regression we should use the exponential function to calculate the real odds of purchasing:

Probability = 1 / (1 + exp(- (intercept coefficient + variable 1 coefficient * variable 1 + variable n coefficient * variable n)))

Now that we have two more columns in our database, we just need to add a third one for the final score, which will be the purchase probability times the estimated amount (Figure 29 ). With this indicator we can either rank our customers (to prioritize marketing and resource allocation for some customers) or use this indicator to estimate next-period revenues.

Statistical analysis

34. Statistical analysis overview

Several statistical analyses can be applied to data to answer different business questions. It is important to know when to apply which model and what the limitations are, but there are two important principles when performing statistical analysis:

-

Context: this means understanding the data, the goal of the model, the exactitude needed for the results, whether or not we need to use all the data set or just a portion of it, etc.

-

Segmentation: this refers to segmenting the data to fit the model better.

In the following chapters I will start by explaining how to perform a descriptive statistics analysis of a variable and then I will explain several analysis techniques, which can be grouped into three main categories:

-

Regressions: the analysis of the relationship between different variables and the creation of models in which unknown values of an outcome variable are estimated using one or more predictor variables;

-

Hypothesis testing: the analysis of the differences between groups based on one or more variables of interest;

-

Classification models: models of which the outcome is membership of a group.

Sometimes several models can be used for the same business issue, and the choice will depend on the kind of variables that we are using (categorical, ordinal, or quantitative) and their distribution.

Once the model has been chosen, the next steps are to check the conditions, carry out the analysis, check the significance and fit, validate, and refine.

An important part of statistical analysis consists of manipulating data to prepare it for the model implementation:

-

Outliers can significantly bias results, so we have three options:

-

Maintain the outlier: in the case that we verify that the value is correct;

-

Elimination: in the case that the outlier is an error;

-

Transformation: in the case that we can correct the error and replace it with the correct value;

-

-

Registers with missing data cannot be used in some statistical methods, such as regressions:

-

Leave missing values if we are using a model that is not affected by them, if the number of affected registers is small, or if we cannot replace them with appropriate values;

-

Replace missing values using a prediction method (we can use a simple average or a more complex method).

-

-

Data binning is necessary when a variable has too many categories and we need to group them, for example to analyze their distribution;

-

Variable transformation can be necessary either to meet the requirements for the implementation of a specific model or to improve the model outcome.

There is a useful website that shows step by step how to create statistical analysis models in Excel: http://blog.excelmasterseries.com/ .

35. DESCRIPTIVE STATISTICS

OBJECTIVE

Analyze the distribution of one or several variables in a data set.

DESCRIPTION

In statistical analysis the first step is to analyze the available data. This step is also useful to check for outliers or for the assumption of normality to use these data for a particular statistical model or test (see 36. INTRODUCTION TO REGRESSIONS ). Since the analysis of these assumptions is included in the chapter introducing regressions, here I will focus on the descriptive statistics that are useful for describing numeric variables:

Statistic Description

Mean Arithmetic mean of the data

Standard Error Represents the difference between the expected value and the actual value

Median Central value (the value that divides the data in two – in the case of an even number of values, the median is the mean of the two central values)

Mode Most frequent value

Standard Deviation A measure of how values are spread out. Mathematically, it is the square root of the variance

Sample Variance Average of the squared differences between each value and the mean (it is also a measure of how values are spread out)

Kurtosis A measure of the “peakedness” and flatness of the distribution. “0” means that the shape is that of a normal distribution, a flatter distribution has negative kurtosis, and a more peaked distribution has positive kurtosis

Skewness A measure of the symmetry of the distribution. “0” means that the distribution is symmetrical. If the value is negative, the distribution has a long tail on the left, and if it is positive, it has a long tail on the right. As a rule of thumb, a distribution is considered to be symmetrical if the kurtosis is between 1 and -1

Range The difference between the largest and the smallest value

Minimum The smallest value

Maximum The largest value

Sum The sum of values

Count The number of values

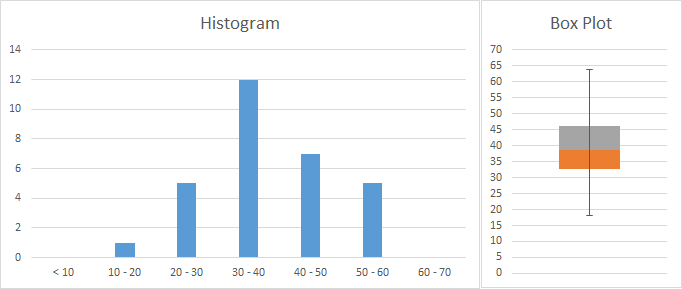

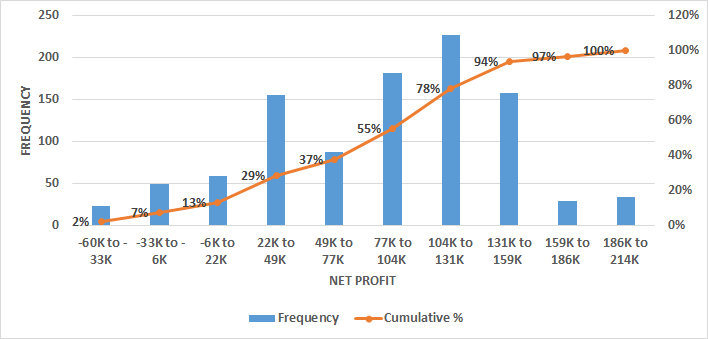

As shown in the template, these statistics can be calculated either using the Excel complement “Data Analysis” or using the Excel functions. The same is valid for creating a histogram, with which we can analyze the frequency of values and gain an idea of the type of distribution. In Figure 30 a sample including age data is represented in a histogram. On the right a box plot provides more information, dividing our data into quartiles (grouping the values into 4 groups containing 25% of the values). The plot shows that 50% of people are aged approximately between 33 and 46 years, while the rest are spread across a bigger range of ages (25% from 46 to 64 and 25% from 18 to 33).

In the template we can see how the two graphs have been created. For the histogram we need to decide which age groups we want to use and fill a table with them. Then we can use the formula “=FREQUENCY” by selecting all the cells on the right of the age groups and pressing “SHIFT + CONTROL + ENTER,” and the formula will provide the frequencies. For the box plot we have to make some calculations and perform some tricks using a normal column chart if we have an older version than Excel 2016. The template and several tutorials can be consulted on the Internet.

Finally, we may have to identify which kind of distribution our data approximate the most (for example to conduct a Monte Carlo simulation). There is no specific method, but we can start by using a histogram and comparing the shape of our data with the shapes of theoretical distributions. The following URL provides 22 Excel templates with graphs and data of different distributions: http://www.quantitativeskills.com/sisa/rojo/distribs.htm .

If our variables are categorical, we can analyze them using a frequency table (count and percentage frequencies). We can also analyze the distribution of frequencies. In the case that our variables are ordinal, we should use the same method for categorical variables (for example if the categories are the answer to a satisfaction question with ordinal answers like “very bad,” “bad,” etc.). However, in some cases we may want to analyze ordinal variables with statistics used for numerical ones (for example, if we are analyzing answers to a question about the quality of services on a scale from 1 to 10, it can be interesting to calculate the average score, range, etc.).

36. INTRODUCTION TO REGRESSIONS

Regressions are parametric models that predict a quantitative outcome (dependent variable) from one or more quantitative predictor variables (independent variable). The model to be applied depends on the kind of relationship that the variables exhibit.

Regressions take the form of equations in which “y” is the response variables that represent the outcome and “x” is the input variable, that is to say the explanatory variable. Before undertaking the analysis, it is important that several conditions are met:

-

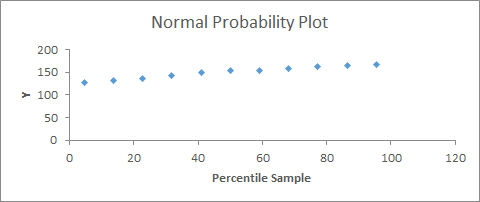

Y values must have a normal distribution: this can be analyzed with a standardized residual plot, in which most of the values should be close to 0 (in samples larger than 50, this is less important), or a probability residual plot, in which there should be an approximate straight line (Figure 31 );

-

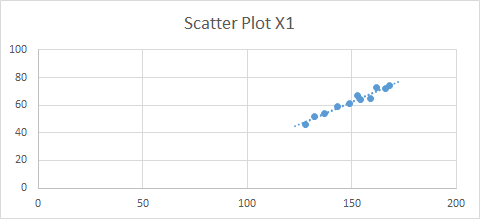

Y values must have a similar variance around each x value: we can use a best-fit line in a scatter plot (Figure 32 );

-

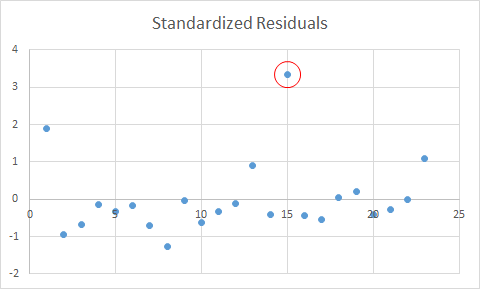

Residuals must be independent; specifically, in the residual plot (Figure 33 ), the points must be equally distributed around the 0 line and not show any pattern (randomly distributed).

If the conditions are not met, we can either transform the variables[^20] or perform a non-parametric analysis (see 47. INTRODUCTION TO NON-PARAMETRIC MODELS ).

In addition, regressions are sensitive to outliers, so it is important to deal with them properly. We can detect outliers using a standardized residual plot, in which data that fall outside +3 and -3 (standard deviations) are usually considered to be outliers. In this case we should first check whether it was a mistake in collecting the data (for example a 200-year-old person is a mistake) and eliminate the outlier from the data set or replace it (see below how to deal with missing data). If it is not a mistake, a common practice is to carry out the regression with and without the outliers and present both results or to transform the data. For example, we may apply a log transformation or a rank transformation. In any case we should be aware of the implications of these transformations.

Another problem with regressions is that records with missing data are excluded from the analysis. First of all we should understand the meaning of a missing piece of information: does it mean 0 or does it mean that the interviewee preferred not to respond? In the second case, if it is important to include this information, we can substitute the missing data with a value:

-

With central tendency measures, if we think that the responses have a normal distribution, meaning that there is no specific reason for not responding to this question, we can use the mean or median of the existing data;

-

Predict the missing values using other variables; for example, if we have some missing data for the variable “income,” maybe we can use age and profession to for the prediction.

Check the linear regression template (see 38. LINEAR REGRESSION ), which provides an example of how to generate the standardized residuals plot.

37. PEARSON CORRELATION

OBJECTIVE

Find out which quantitative variables are related to each other and define the degree of correlation between pairs of variables.

DESCRIPTION

This method estimates the Pearson correlation coefficient, which quantifies the strength and direction of the linear association of two variables. It is useful when we have several variables that may be correlated with each other and we want to select the ones with the strongest relationship. Correlation can be performed to choose the variables for a predictive linear regression.

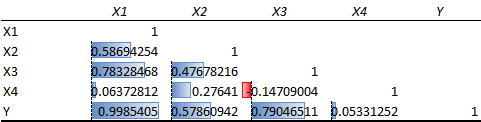

With the Excel Data Analysis complement, we can perform a correlation analysis resulting in a double-entry table with correlation coefficients (Pearson’s coefficients). We can also calculate correlations using the Excel formula “=CORREL().” The sign of the coefficient (Pearson correlation coefficient) represents the direction (if x increases then y increases = positive correlation; if x increases then y decreases = negative correlation), while the absolute value from 0 to 1 represents the strength of the correlation. Usually above 0.8 it is very strong, from 0.6 to 0.8 it is strong, and when it is lower than 0.4 there is no correlation (or it is very weak).

Figure 34 shows that there is a very strong positive correlation between X1 and Y and a strong positive correlation between X1–X3 and X3–Y. X3 and X4 have a weak negative correlation.

38. LINEAR REGRESSION

OBJECTIVE

Define the linear relationship between an input variable (x) and an outcome variable (y) to create a linear regression predictive model.

DESCRIPTION

The equation for a linear regression is:

Y = α + βx

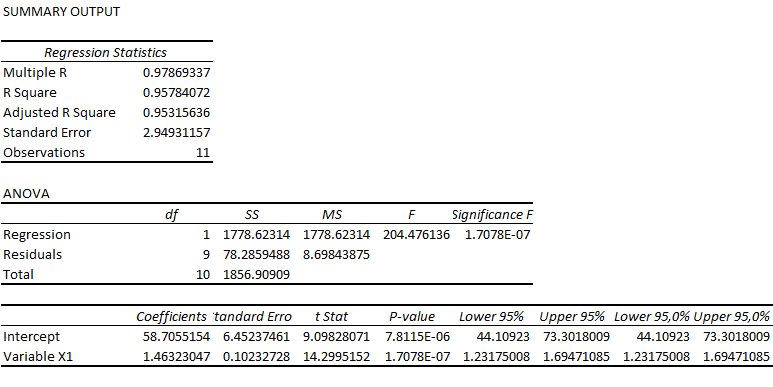

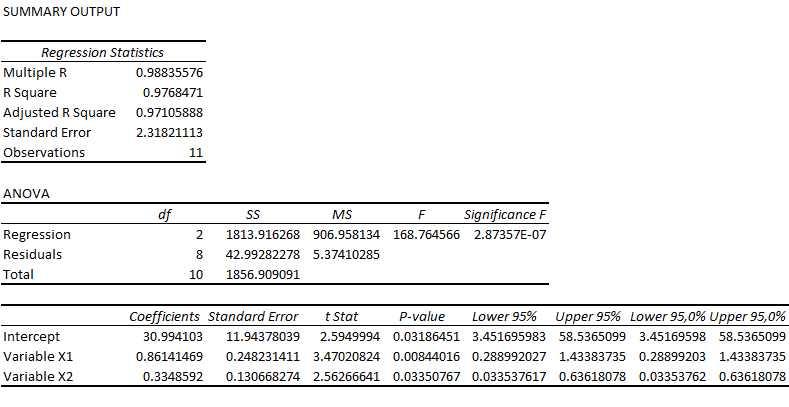

where $\ y$ is the outcome variable, α is the intercept, β is the coefficient, and x is the predictor variable. This model is used to predict values of y for x values that are not in the data set or, in general, how the outcome is affected when the input variable changes. For example, such a model can predict how much sales would increase due to an increase in advertising spending.

In a linear regression we can only use numerical variables, which are either discrete or continuous. We can include categorical variables by transforming them into dummy variables (a categorical variable with four categories is transformed into three variables of two values, 0 and 1).